AEO vs GEO: The New Rules of AI Search for 2026

You’re probably seeing the same pattern many marketing teams are seeing now. Rankings look stable. Core pages still hold strong positions. But clicks soften, branded discovery gets murkier, and leadership starts asking why visibility feels weaker even when SEO reports still look healthy.

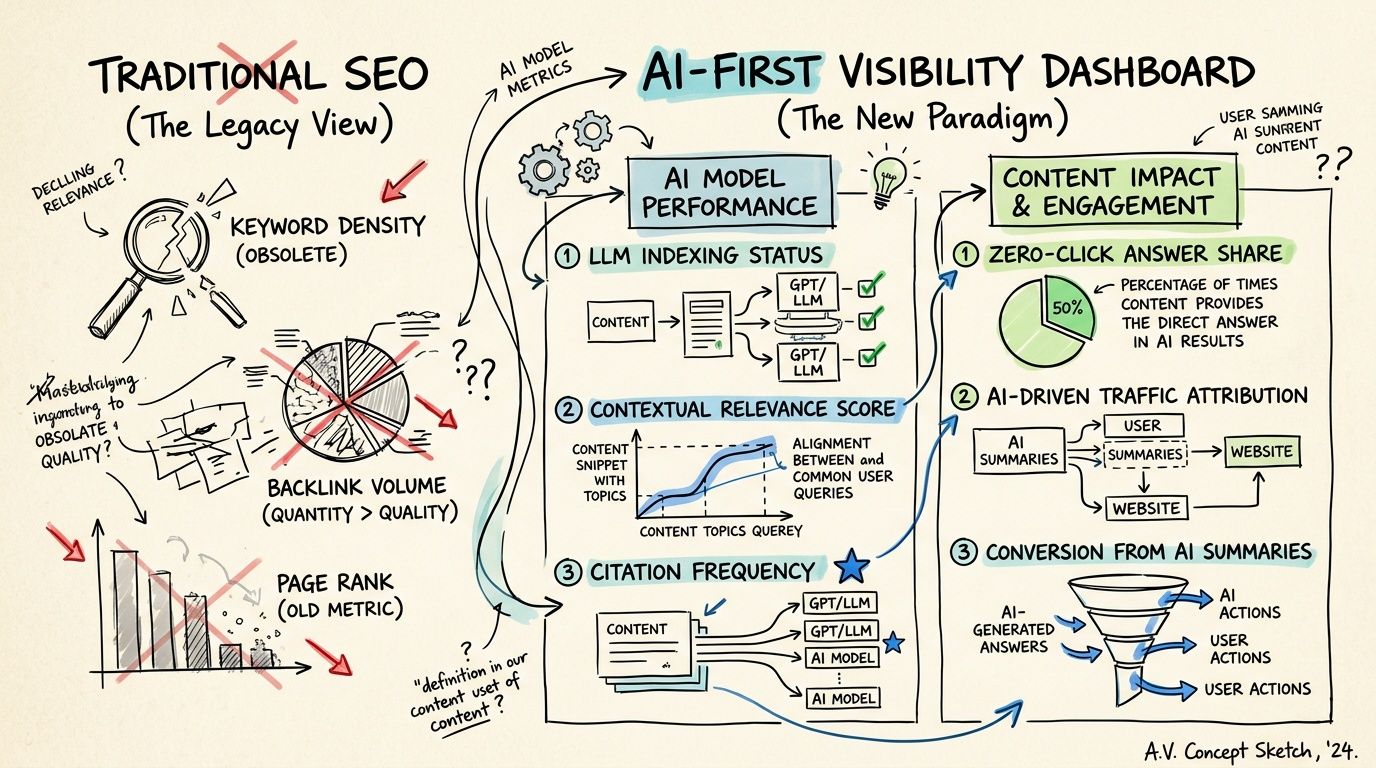

That disconnect is the new search reality. Traditional SEO still matters, but it no longer explains the full path from query to influence. Google can answer the question before the click. ChatGPT, Gemini, Claude, and Perplexity can frame the shortlist before a buyer ever reaches your site. If you only measure rankings, you miss the layer where AI systems summarize, recommend, and quote.

That’s where AEO vs GEO becomes a useful distinction. AEO is about winning direct answers in search environments. GEO is about shaping how generative AI systems describe your brand, category, and alternatives. Most teams need both. The hard part isn’t learning the acronyms. It’s building workflows, content, and reporting that treat them as separate disciplines with different outcomes.

The New Reality of Search: AEO vs GEO

A common scenario now looks like this. Your team publishes solid content, earns rankings, and still watches traffic flatten or fall on pages that used to pull their weight. The issue often isn’t that the page stopped ranking. It’s that the search experience changed around it.

AI-generated summaries now intercept demand before the visit. According to Ahrefs research cited by Jasper, AI Overviews reduced click-through rates for top-ranking Google content by 58%, up from 34.5% a year earlier. That is why many teams now treat AI answer visibility as a separate operating area from classic rankings (Jasper on GEO and AEO).

That shift changes what “good performance” looks like. A page can rank well and still lose traffic because the answer gets extracted into the interface. It can also influence the buyer more than before, even if fewer sessions reach analytics.

Why rankings alone don’t explain performance anymore

Search used to be easier to interpret. Rank well, earn clicks, improve conversion. Now there are at least two additional questions:

- Was your content used as the answer?

- Was your brand part of the AI-generated comparison set?

Those are not the same thing. The first is mostly an AEO problem. The second is often a GEO problem.

Practical rule: If your team only reports rankings and organic sessions, you’re under-measuring search performance.

The overlap with traditional SEO still matters because strong organic pages often feed AI surfaces. But operationally, marketers now need to optimize for extraction, citation, framing, and brand mention quality. That requires tighter information architecture, cleaner entity signals, and much better monitoring of how AI systems retrieve and present content.

For teams trying to understand the retrieval side of this shift, this guide for search professionals is useful because it grounds AI visibility in how systems find and assemble information, rather than treating AI search like a black box.

Defining AEO and GEO Beyond the Acronyms

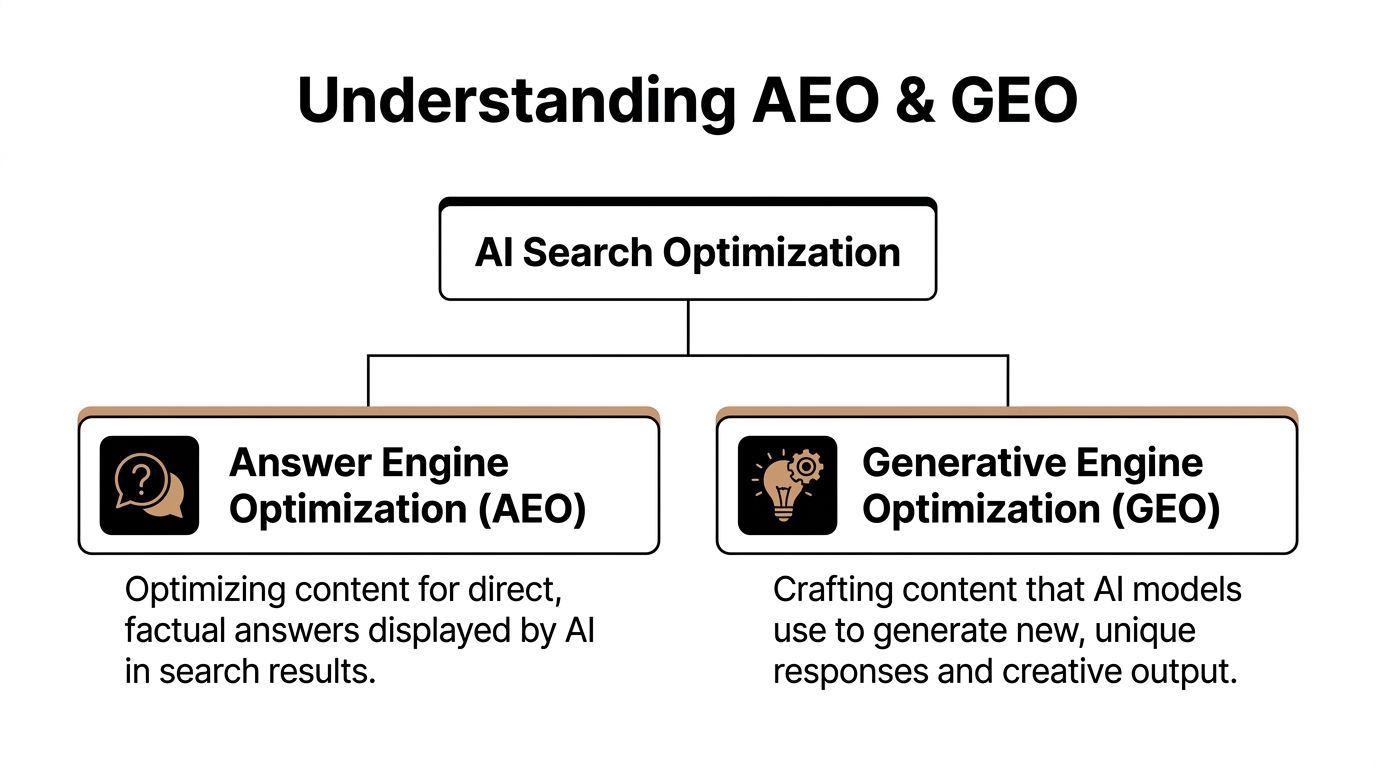

AEO stands for Answer Engine Optimization. GEO stands for Generative Engine Optimization. The names sound adjacent, but they serve different jobs.

AEO is the discipline of making content easy for search engines and answer surfaces to extract as a direct response. GEO is the discipline of making your brand and content more likely to be referenced, cited, and framed favorably inside generated outputs from large language models.

Where each one shows up

The platform distinction matters. Research summarized by Stackmatix describes a clear split: AEO targets search engines like Google and Bing that surface structured answers, while GEO focuses on conversational LLMs such as ChatGPT, Gemini, Claude, and Perplexity (Stackmatix on AEO, SEO, and GEO).

That means your team shouldn’t talk about them as interchangeable.

- AEO lives in answer-first search moments. Think direct definitions, step-by-step questions, FAQs, and search experiences where the user wants a fast factual response.

- GEO lives in synthesis moments. Think “best options,” “compare vendors,” “what should I choose,” or category research where AI creates a recommendation layer.

The user journey is different

AEO usually helps earlier when a person needs clarity. GEO often matters when a person evaluates options and asks the model to narrow the field.

That’s why content strategy diverges:

- AEO rewards clarity, explicit structure, concise answers, and extractable formatting.

- GEO rewards authority, depth, differentiation, quotable points of view, and topic completeness.

If your team wants a simpler primer on the answer-first side, this overview of what Answer Engine Optimization means in practice is a helpful baseline.

For location-driven brands, there’s also a practical local angle. This resource on how to boost local visibility with GEO is worth reviewing because local trust signals and entity consistency affect how generative systems describe nearby businesses.

AEO helps machines lift an answer. GEO helps machines build a narrative that includes you.

Core Differences: Goals, Tactics, and Targets

Most confusion around AEO vs GEO disappears once you compare them operationally. The table below is the version I’d use with a marketing team deciding what to do next.

AEO vs GEO At a Glance

| Dimension | AEO (Answer Engine Optimization) | GEO (Generative Engine Optimization) |
|---|---|---|
| Primary goal | Win direct answer visibility | Influence AI-generated summaries and recommendations |
| Main outcome | Your content appears as the answer | Your brand appears in the model’s framing, citations, or comparisons |
| Typical platforms | Google, Bing, voice assistants, answer surfaces | ChatGPT, Gemini, Claude, Perplexity |
| Best query types | Clear questions, definitions, how-to searches, factual prompts | Comparative research, vendor evaluation, category exploration |
| Content format | FAQ blocks, concise definitions, structured headings, short answer sections | In-depth guides, original perspectives, category pages, expert commentary |
| Technical emphasis | Structured data, clean heading hierarchy, extractable paragraphs | Entity consistency, source credibility, topical depth, citation-worthy passages |
| Editorial style | Direct, precise, answer-first | Nuanced, authoritative, synthesis-friendly |
| Success signal | Snippet wins, answer visibility, AI Overview presence | Brand mentions, co-citations, sentiment, recommendation presence |
| Common failure mode | Over-optimizing short answers with little supporting depth | Publishing long-form thought leadership that lacks clarity and retrieval cues |

What teams actually do differently

In practice, AEO work often looks like content refinement. Teams rewrite intros, add direct answers below H2s, tighten definitions, clean up FAQ sections, and make key passages easy to extract. It’s closer to on-page search optimization, even though the output appears in AI-assisted interfaces.

GEO work is broader. It reaches into brand, PR, content strategy, and category positioning. Teams build stronger topic clusters, publish sharper comparison pages, create opinionated expert content, align messaging across third-party sources, and make sure the web says the same thing about the company wherever models may retrieve it.

If your team needs a dedicated explainer on the generative side, this article on what Generative Engine Optimization includes gives the category a clean definition.

What works and what doesn’t

AEO usually works best when content answers one question cleanly before expanding. It tends to fail when pages bury the answer under brand copy or force users through vague introductions.

GEO works when the brand has a clear, repeatable point of view that appears across its own site and trusted external sources. It fails when a company sounds generic, says different things in different places, or publishes content that is polished but not quotable.

The biggest tactical mistake is assuming the same page template can do both jobs equally well.

Measuring Success: The AEO vs GEO Scorecard

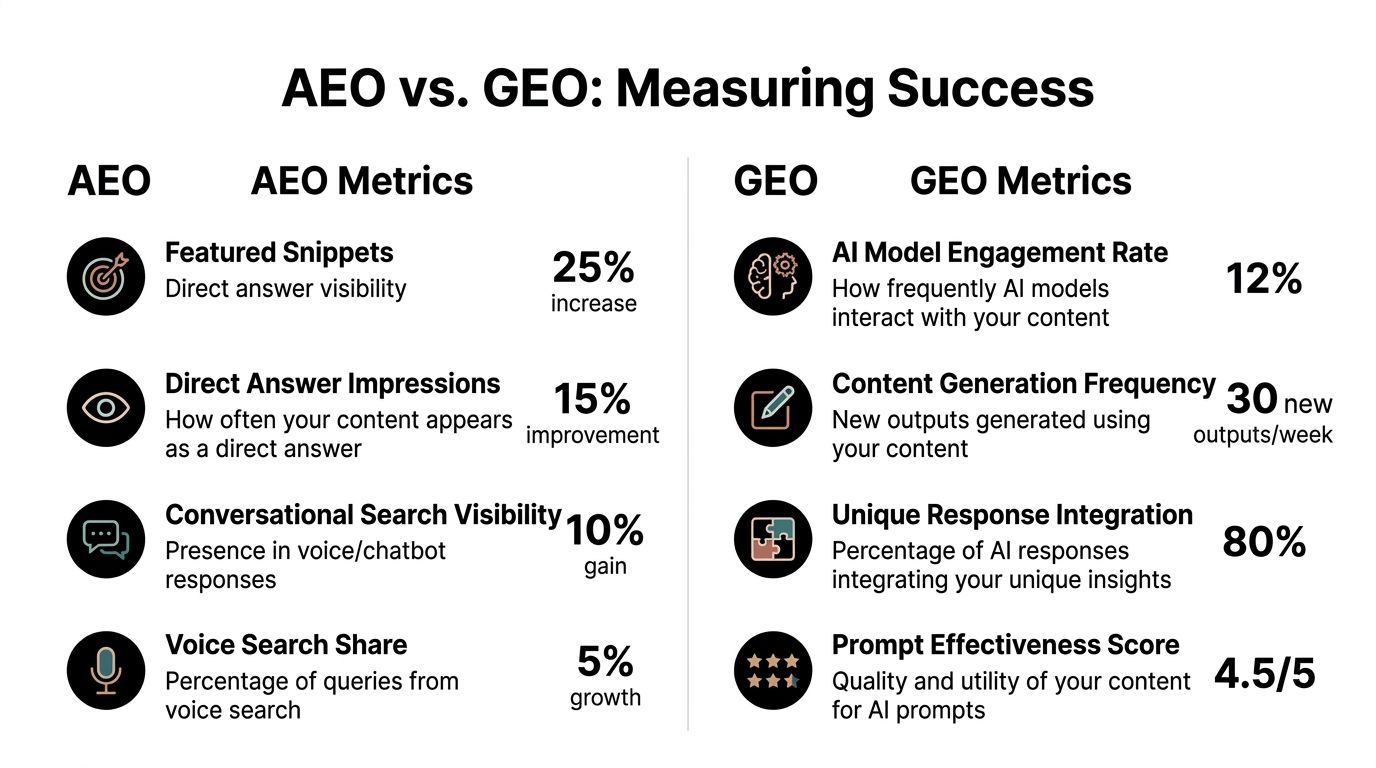

Measurement is where teams frequently lag. They discuss AI visibility in abstract terms, but they don’t build a scorecard that separates answer performance from brand influence.

That separation matters. Similarweb’s summary of AEO and GEO measurement frames it well: AEO excels in SERP-based visibility metrics such as featured snippet count and AI Overview citations, while GEO prioritizes brand-level metrics including brand visibility score, brand mention share, and sentiment distribution (Similarweb on AEO vs GEO).

AEO metrics to put on a dashboard

For AEO, the reporting layer should stay close to search result behavior.

- Featured snippet presence: Did your page win answer-style real estate for core questions?

- People Also Ask appearances: Are you showing up where searchers branch into related questions?

- AI Overview citations: Does Google pull your domain into summary experiences?

- Zero-click visibility patterns: Are impressions growing even when sessions don’t?
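The zero-click pattern in the last bullet can be checked with a few lines of code once you have per-page impressions and clicks for two comparable periods. The sketch below is a minimal illustration, not a prescribed method: the `PageStats` fields, the 10% growth threshold, and the sample URLs and numbers are all assumptions made up for the example, and you would swap in an export from whatever search reporting tool you use.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    """Hypothetical per-page totals for two comparable periods."""
    url: str
    prev_impressions: int
    prev_clicks: int
    curr_impressions: int
    curr_clicks: int


def zero_click_candidates(pages, min_impression_growth=0.10):
    """Return URLs whose impressions grew while clicks stayed flat or fell."""
    flagged = []
    for p in pages:
        if p.prev_impressions == 0:
            continue  # no baseline to compare against
        growth = (p.curr_impressions - p.prev_impressions) / p.prev_impressions
        if growth >= min_impression_growth and p.curr_clicks <= p.prev_clicks:
            flagged.append(p.url)
    return flagged


# Illustrative numbers only, not real data.
pages = [
    PageStats("/what-is-aeo", 1000, 120, 1400, 90),
    PageStats("/pricing", 800, 200, 820, 210),
    PageStats("/geo-guide", 500, 40, 700, 65),
]
print(zero_click_candidates(pages))
```

A flagged page is a candidate for closer inspection, not proof that an answer surface is absorbing the click. Seasonality or a SERP layout change can produce the same shape.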

This isn’t just SEO with new labels. A page that ranks third but consistently feeds answer surfaces can have strategic value that basic traffic reporting hides.

GEO metrics that reflect influence

GEO needs a different lens. You’re measuring whether AI systems include your brand, how they describe it, and how often competitors displace you in recommendation flows.

Useful GEO reporting includes:

- Brand mention share: How often your brand appears relative to category competitors

- Citation frequency: Whether models repeatedly rely on your site or third-party references about you

- Sentiment distribution: Whether the model’s language is favorable, neutral, or problematic

- Co-citation patterns: Which brands show up beside you, because that often reveals the competitive set AI is constructing

- Prompt coverage: Whether you appear across high-value prompts, not just one or two obvious ones
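Two of these metrics, brand mention share and prompt coverage, are simple ratios once you have collected model responses for a set of tracked prompts. Here is a rough sketch under stated assumptions: the brand names (Acme, Globex, Initech), the prompts, and the responses are invented for illustration, and matching is a naive whole-word search that would miss misspellings, abbreviations, or product names that differ from the brand name.

```python
import re


def _mentions(brand, text):
    """True if the brand name appears as a whole word, ignoring case."""
    pattern = rf"\b{re.escape(brand)}\b"
    return re.search(pattern, text, flags=re.IGNORECASE) is not None


def mention_share(responses, brands):
    """Each brand's share of all brand-mentioning responses."""
    counts = {b: sum(_mentions(b, r) for r in responses) for b in brands}
    total = sum(counts.values())
    return {b: (n / total if total else 0.0) for b, n in counts.items()}


def prompt_coverage(responses_by_prompt, brand):
    """Fraction of tracked prompts where at least one response names the brand."""
    if not responses_by_prompt:
        return 0.0
    hits = sum(
        any(_mentions(brand, r) for r in responses)
        for responses in responses_by_prompt.values()
    )
    return hits / len(responses_by_prompt)


# Hypothetical prompts and responses, for illustration only.
responses_by_prompt = {
    "best crm for startups": [
        "Acme and Globex are popular choices.",
        "Globex is often recommended.",
    ],
    "acme alternatives": ["Alternatives to Acme include Initech and Globex."],
    "crm comparison": ["Globex leads on price; Initech leads on support."],
}
all_responses = [r for rs in responses_by_prompt.values() for r in rs]

shares = mention_share(all_responses, ["Acme", "Globex", "Initech"])
coverage = prompt_coverage(responses_by_prompt, "Acme")
print(shares, coverage)
```

Sentiment distribution and co-citation patterns need more than string matching, so they are left out of this sketch on purpose.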

For broader search reporting context, this resource on SEO visibility and search metrics is a useful complement because it helps teams connect classic search measurement with newer AI-facing visibility signals.

The scorecard mistake to avoid

Many teams still force GEO into a traffic-only model. That’s too narrow. A buyer can ask ChatGPT for the best tools in a category, see your brand positioned well, and convert later through another channel. The influence happened even if the click path didn’t.

If AEO tells you whether you won the answer, GEO tells you whether you shaped the shortlist.

Decision Framework: When to Prioritize AEO or GEO

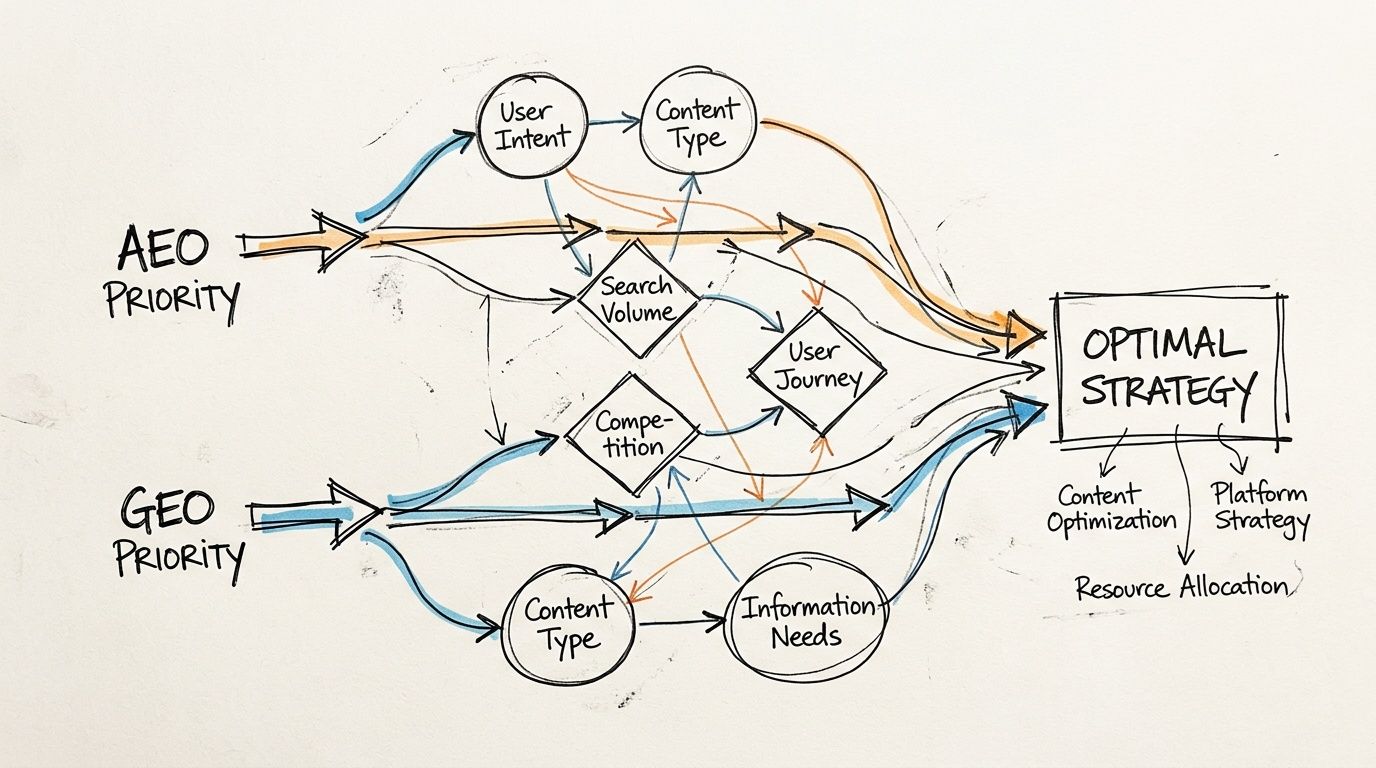

The right priority depends on business goals, content maturity, and how buyers search in your category. You don’t need a philosophical stance on AEO vs GEO. You need a sequencing decision.

HubSpot’s benchmark summary makes the split practical: AEO shows stronger performance for discovery queries, including 70%+ coverage in featured snippets for question-driven searches, while GEO has an edge in AI recommendation layers through higher citation share in ChatGPT and Claude summaries (HubSpot on AEO vs GEO strategy).

Prioritize AEO when the buyer asks direct questions

Start with AEO if your growth depends on capturing high-intent informational demand. This is common for SaaS onboarding queries, healthcare explainers, finance definitions, product education, and support-adjacent content.

AEO deserves first attention when:

- Your best opportunities are question-led searches such as “how to,” “what is,” or “why does.”

- Your site already ranks but underperforms on clicks, suggesting the interface may be answering before the visit.

- Your content is useful but poorly structured, which usually means extractability is the issue, not authority.

In those environments, answer formatting can improve visibility faster than a broad authority campaign.

Prioritize GEO when AI recommendation framing matters

GEO should move to the front when your buyer journey involves comparison, trust, and category framing. B2B software, agencies, services, and premium consumer products often fall into this bucket.

Choose GEO first when:

- Buyers ask models for the best options, alternatives, or comparisons.

- Your brand is often absent from AI-generated shortlists, even though humans in the market know you.

- Your category needs explanation and differentiation, which means simple FAQ formatting won’t be enough.

The workflow below is useful if your team needs a visual way to discuss that split.

When a hybrid approach is the better answer

In most mature programs, the practical answer is both. But “both” doesn’t mean equal investment at the same time.

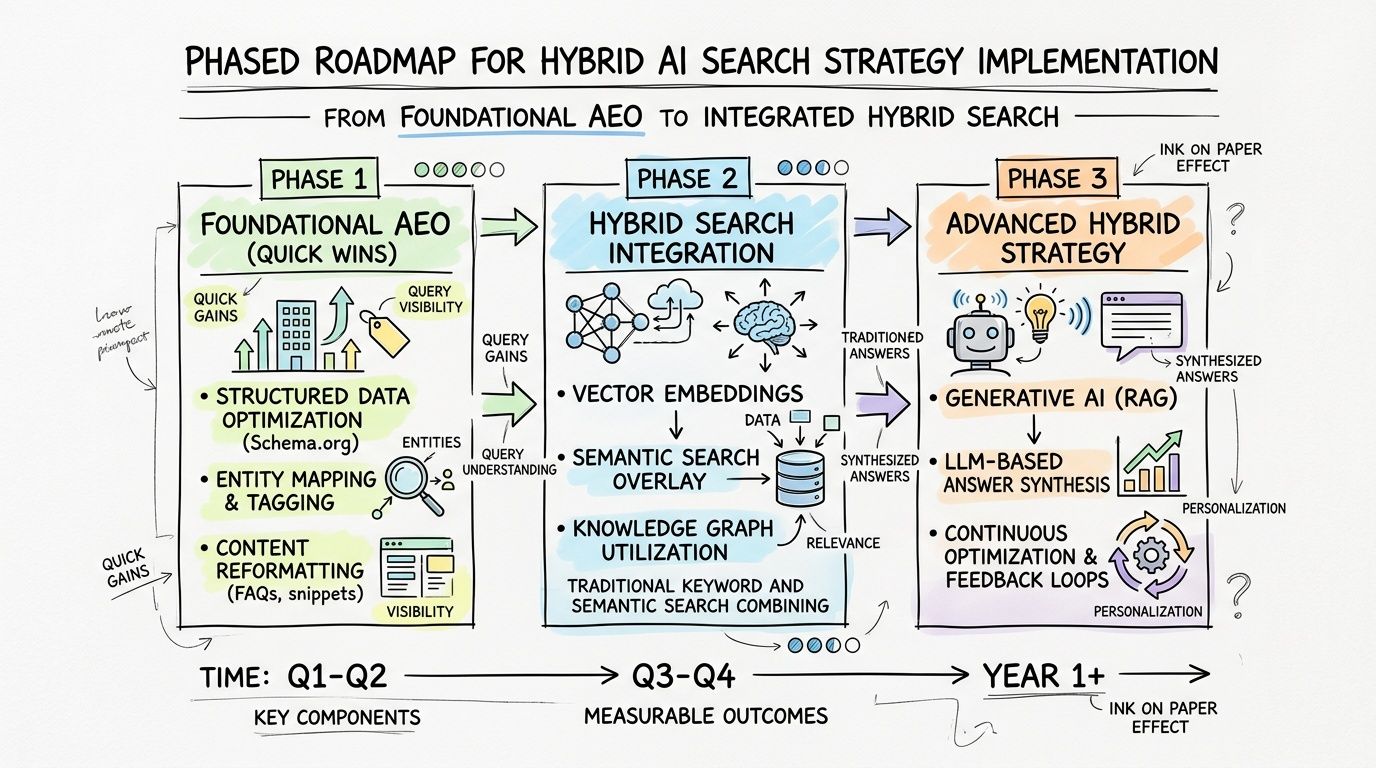

A good sequence is often:

- Fix answer visibility first on pages already close to winning search surfaces.

- Build generative influence next with stronger topic depth, sharper category positioning, and off-site consistency.

- Review overlap areas where one asset can support both jobs with better structure and richer substance.

Teams that start with everything at once usually create noise. Teams that sequence by query type usually create results.
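Sequencing by query type can start with a rough first-pass sort of your keyword or prompt list. The sketch below uses the cues mentioned earlier in this section ("how to," "what is," "why does" for AEO; best options, alternatives, and comparisons for GEO). The exact patterns, the extra "vs" cue, and the rule that GEO cues win when both appear are assumptions made for this example. Real query sets will need manual review.

```python
import re

# Question-led openers suggest an answer-first (AEO) query.
AEO_PATTERN = re.compile(r"^(how to|what is|why does)\b")
# Evaluation language suggests a recommendation-layer (GEO) query.
GEO_PATTERN = re.compile(r"\b(best|alternatives?|compare|comparison|vs)\b")


def classify_query(query):
    """Label a query 'GEO', 'AEO', or 'unclassified' using simple text cues."""
    q = query.lower().strip()
    if GEO_PATTERN.search(q):  # assumption: comparison intent takes priority
        return "GEO"
    if AEO_PATTERN.search(q):
        return "AEO"
    return "unclassified"


# Hypothetical queries, for illustration only.
for query in [
    "how to set up lead scoring",
    "best crm for agencies",
    "acme alternatives",
    "crm pricing",
]:
    print(query, "->", classify_query(query))
```

Anything that lands in "unclassified" is a prompt to look at the actual search results and model outputs for that query before deciding where it belongs.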

Implementing Your Hybrid AI Search Strategy

A hybrid program works best when you separate quick wins from slower authority-building work. This is also where budgeting gets real. Wellows notes a persistent market gap in ROI comparison and cites examples where AEO schema tweaks might cost $5K while GEO entity-building campaigns may reach $50K annually, without clear cross-market ROI benchmarks (Wellows on AEO vs GEO costs and gaps).

That doesn’t mean GEO is too expensive or AEO is cheap in every case. It means teams need a phased plan instead of treating both as one line item.

Phase one: clean up answer extraction

Start with your existing content inventory. Find pages that already rank, answer common questions, or support buyer education.

The most practical first actions are:

- Rewrite key sections for direct answers: Put a clear response immediately under the heading.

- Tighten heading logic: Each H2 should map to a distinct question or subtopic.

- Add or improve FAQ areas: Not as filler, but as real answer blocks tied to search intent.

- Check structured data coverage: Especially where search engines need help understanding page purpose.
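For the structured data step, one common option is schema.org FAQPage markup embedded as JSON-LD. The article does not name a specific schema type, so treat FAQPage as an assumption here, along with the `faq_jsonld` helper name. Whether a given search engine uses or displays this markup varies and changes over time. The sketch below only shows how the markup can be generated from existing question and answer pairs.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


# Answers reuse the definitions given earlier in this article.
faqs = [
    (
        "What is AEO?",
        "AEO is the discipline of making content easy for search engines "
        "and answer surfaces to extract as a direct response.",
    ),
    (
        "What is GEO?",
        "GEO is the discipline of making your brand and content more likely "
        "to be referenced, cited, and framed favorably in generated outputs.",
    ),
]

doc = faq_jsonld(faqs)
# Paste the printed JSON inside a <script type="application/ld+json"> tag.
print(json.dumps(doc, indent=2))
```

The markup should mirror answers that are visible on the page. Generating it from the same source as the on-page FAQ block keeps the two from drifting apart.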

These are usually the fastest wins because you’re improving assets that already have some authority.

Phase two: build citation-worthy depth

GEO takes longer because it depends on what the broader web says about you, not only what your own site publishes.

Focus on:

- Category pages with differentiated positioning

- Comparison content that doesn’t dodge trade-offs

- Expert commentary and original points of view

- Consistent brand descriptions across site, listings, media mentions, and profiles

This is also the point where content, SEO, PR, and brand teams need to stop operating as separate tracks. AI systems don’t care which department created the source. They synthesize what’s available.

If your team is evaluating stack changes, this roundup of AI search optimization tools is a useful place to compare workflow options.

Phase three: review the hybrid layer

Once the basics are in place, audit pages for dual purpose. Some assets should stay tightly answer-focused. Others should stay deep and persuasive. A smaller set can do both if they open with a precise answer and then expand into context, examples, and differentiation.

That’s usually the strongest long-term model.

How to Monitor and Optimize AI Visibility

Traditional SEO tooling won’t fully solve this. It can tell you where you rank and sometimes whether you appear in certain SERP features. It usually won’t tell you how Claude describes your product, whether ChatGPT mentions a competitor more often, or which third-party sources a model appears to rely on when framing your category.

That creates a reporting gap. Teams can see symptoms, but not the underlying AI presentation layer. To optimize properly, you need to track prompts, compare outputs across models, inspect wording, monitor sentiment, and benchmark competitors in the same response environments.

A workable monitoring loop looks like this:

- Track priority prompts by business line: Not just branded prompts, but comparison and category prompts.

- Review verbatim model wording: Small wording shifts often reveal positioning problems.

- Benchmark side by side against named competitors: AI visibility is relative, not absolute.

- Connect source patterns to content actions: If a model keeps relying on weak or outdated descriptions, your team needs to strengthen the source ecosystem.
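Reviewing verbatim wording is easier when you compare snapshots of the same prompt over time instead of rereading full responses. As a minimal sketch, Python’s standard `difflib` module can surface the words that changed between two saved outputs. The two example responses and the brand name Acme are invented for illustration, and how you capture and store model outputs is left open.

```python
import difflib


def wording_changes(old, new):
    """Return (removed, added) word spans that differ between two responses."""
    a, b = old.split(), new.split()
    matcher = difflib.SequenceMatcher(a=a, b=b, autojunk=False)
    changes = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag != "equal":
            changes.append((" ".join(a[i1:i2]), " ".join(b[j1:j2])))
    return changes


# Hypothetical snapshots of one model's answer to the same prompt.
last_month = "Acme is a leading CRM for small teams."
this_month = "Acme is a budget CRM for small teams."

print(wording_changes(last_month, this_month))
```

A one-word change from "leading" to "budget" is exactly the kind of small shift that can signal a positioning problem, and a word-level diff makes it hard to miss.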

For teams also building custom AI workflows internally, this guide to fine-tuning LLMs is useful background because it helps explain why model behavior, retrieval, and source quality don’t always line up cleanly.

The operational goal is simple. Stop treating AI visibility as anecdotal. Measure it, inspect it, and adjust content and brand signals based on what the models output.

If your team needs that visibility layer in one place, promptposition helps you monitor how ChatGPT, Claude, Gemini, Perplexity, and other models present your brand, track sentiment and competitor gaps, review verbatim quotes, and turn AI search visibility into something your team can report on and improve.