Perplexity Vs Copilot: AI for Brand Success

A lot of marketing teams are in the same position right now. They ask Perplexity or Copilot about their company, a product line, or a category they dominate, and the answer is off. Maybe the model cites an outdated review, misses a recent launch, or frames a competitor as the category leader when that’s no longer true.

That moment changes the conversation. This stops being a simple AI tooling debate and becomes a brand governance issue. If customers, journalists, analysts, and prospects use AI interfaces to form first impressions, then Perplexity vs Copilot is not just a feature comparison. It’s a decision about how your team will research, verify, draft, monitor, and respond when AI systems shape public perception.

The New Battlefield for Brand Reputation

A common failure pattern looks like this. A brand team spends months tightening messaging across the website, newsroom, analyst relations, and review profiles. Then someone runs a prompt in an AI assistant and gets a stitched-together answer that mixes old claims, weak sources, and a competitor mention that shouldn’t be there.

That’s the new reality. Brand reputation no longer lives only in Google rankings, earned media, and your own site. It also lives inside answer engines, workspace assistants, and the source networks those systems trust.

Why this matters to marketing leaders

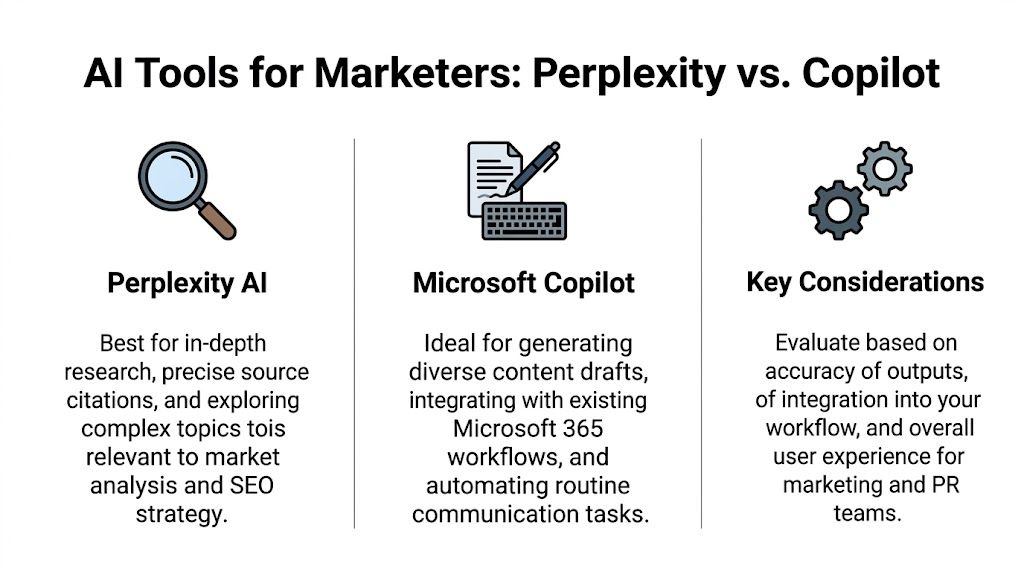

Perplexity and Microsoft Copilot affect different parts of the decision chain. One can shape how external information is gathered and summarized. The other can shape how internal teams package and distribute that information across documents, presentations, and communication flows.

For marketing leaders, that means three things:

- Your brand narrative can drift fast if AI tools rely on outdated or low-authority sources.

- Your team needs source discipline because AI-generated summaries can look polished while still carrying factual risk.

- Your measurement model has to expand beyond classic SEO reporting into AI visibility, sentiment, and source tracking.

This is also where marketing and security start overlapping in practical ways. If your team is evaluating AI tools for research and communications, the governance side matters as much as the output quality. Marketing and Cybersecurity Alignment for Better Brand Value is a useful read because it frames brand protection as an operational issue, not just a messaging issue.

What changes in practice

The shift is simple to describe and harder to manage. You’re no longer optimizing only for a search engine results page. You’re optimizing for systems that synthesize, compress, and reframe information before a human ever clicks a source.

Practical rule: If an AI model can answer a category question without sending a user to your website, your brand team needs a process for checking those answers routinely.

If you’re already tracking how models describe your company over time, it helps to look at AI output the same way you’d track media coverage or review sentiment. This overview of brand sentiment in AI search is useful for that framing because it ties wording, source selection, and competitive positioning together.

Answer Engine vs Productivity Assistant

Before comparing features, it helps to separate the two products by purpose. Perplexity is an answer engine. Copilot is a productivity assistant. That difference explains most of the trade-offs marketing teams run into.

| Criteria | Perplexity | Microsoft Copilot |
|---|---|---|
| Core role | Answer engine for research | Productivity assistant inside Microsoft workflows |
| Best use | Market intelligence, source verification, external topic synthesis | Drafting, summarizing, internal reporting, communication support |
| Source behavior | Citation-first, web-oriented | Workflow-oriented, less centered on open-web citation depth |
| Marketing strength | Fast competitive research and source checking | Faster execution inside Word, Excel, PowerPoint, Teams, Outlook |
| Main limitation | Requires careful governance for sensitive workflows | Can feel generic on niche external research |

What Perplexity is built to do

Perplexity is strongest when the job is to gather, verify, and synthesize external information quickly. According to Gmelius’s comparison of AI assistants, Perplexity excels in real-time, citation-first research with verified source synthesis, and it’s optimized for rapid fact verification in market intelligence workflows. The same comparison notes technical differentiators such as unlimited Pro Search, enhanced AI models, file uploads, and API credits, which makes it better suited to source-led research tasks than general office assistance.

For marketers, that matters in practical ways. If you need to check who is being cited around a category trend, pull source-backed summaries on competitors, or test how a launch is reflected across the web, Perplexity is usually closer to the right operating model.

What Copilot is built to do

Copilot is most useful when the work starts after the research. It sits inside Microsoft 365 and supports tasks teams already do in Word, Excel, PowerPoint, Outlook, and Teams. That makes it valuable for turning notes into internal summaries, shaping first drafts, and keeping communications tied to an existing enterprise workflow.

Its strength is less about open-web research velocity and more about execution inside a managed work environment. If a brand team already lives in Microsoft 365, Copilot can reduce friction in the places where handoffs usually slow down.

The mental model that keeps teams from buying the wrong tool

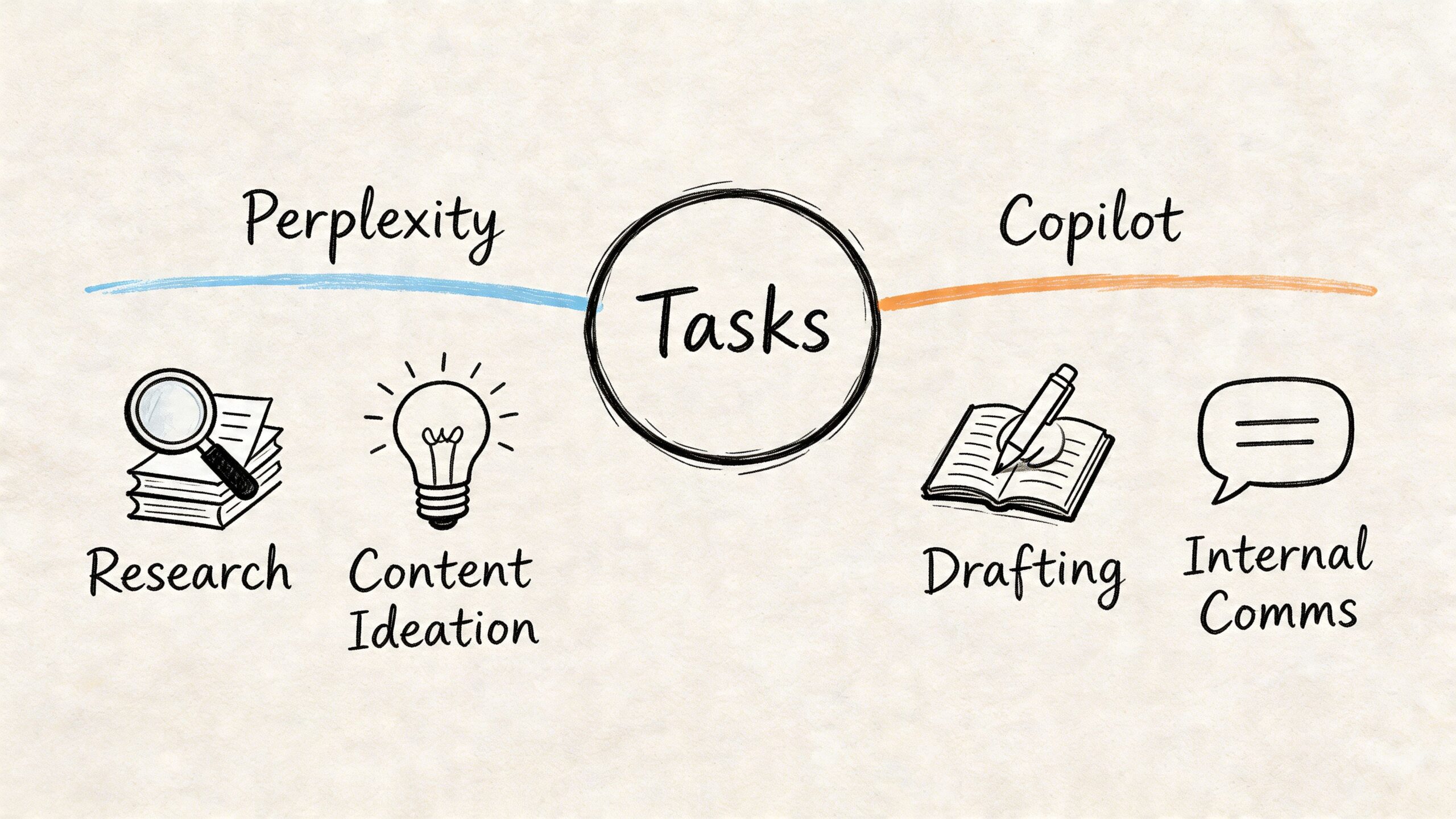

Many teams compare Perplexity and Copilot as if one should replace the other. That usually leads to disappointment. The better question is whether your team’s bottleneck is finding trustworthy external information or operationalizing information inside daily work.

Use this split:

- Choose Perplexity-first when the task starts with uncertainty. You need to ask what’s happening, who’s saying it, what sources are being cited, and where your brand is absent.

- Choose Copilot-first when the task starts with context already in hand. You know what the message is, and you need help drafting, summarizing, formatting, or sharing it consistently.

Teams often fail with Copilot when they expect researcher behavior from a workflow tool. They fail with Perplexity when they expect enterprise-native drafting and governance from a research tool.

If your team is also trying to understand how Perplexity differs from other mainstream AI interfaces, this guide on the difference between Perplexity and ChatGPT gives a useful framing around answer behavior and sourcing expectations.

Comparing Perplexity and Copilot for Marketing Teams

A marketing lead usually feels this decision in two places first: the weekly brand intelligence report and the moment legal asks where a claim came from.

The useful comparison is not “which AI is better.” It is which tool helps your team detect, verify, and influence brand visibility in AI search without creating review risk or workflow drag. For that job, Perplexity and Copilot serve different parts of the stack.

Answer quality for marketing work

Perplexity is usually the stronger choice for external brand analysis because the sourcing is visible enough to inspect. A strategist can trace a market claim, review the cited pages, and decide whether the answer reflects current coverage, outdated commentary, or a competitor’s stronger footprint.

Copilot is often better once the research phase is over. It helps teams turn approved inputs into drafts, recaps, decks, and internal summaries inside the Microsoft environment many departments already use every day.

That difference matters more than output style.

For brand monitoring, I use a simple test. If the team needs to ask, “Where did this claim come from, and would I show that source to PR or legal?”, Perplexity is usually the better starting point. If the team needs to ask, “Can we turn approved material into a polished internal deliverable fast?”, Copilot usually fits better.

Where each tool helps a marketing department

The trade-off is easiest to see in common team requests:

| Marketing task | Better fit | Why |
|---|---|---|
| Brand mention audit across the visible web | Perplexity | Easier to inspect cited sources and compare narratives |
| Executive brief on a competitor category move | Perplexity | Better for gathering external evidence quickly |
| Drafting a holding statement in Word | Copilot | Stronger fit for document-based drafting and revision |
| Summarizing internal campaign notes | Copilot | Better inside existing meetings, docs, and email workflows |
| Checking whether AI answers reflect current messaging | Perplexity | More useful for source review and answer testing |
| Standardizing messaging across internal files | Copilot | Better for operational consistency inside Microsoft 365 |

This is also where measurement starts to matter. Teams choosing between these tools should not stop at usability feedback. They should track whether branded prompts return accurate brand descriptions, whether citations point to owned or earned media, and whether competitor narratives appear more often than their own. A structured file such as an LLMs.txt implementation for AI crawlers and answer engines can support that work by making your preferred brand context easier to publish and maintain.
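To make the citation part of that tracking concrete, here is a minimal Python sketch that classifies the sources behind one AI answer as owned, earned, or other and reports the share of each. It assumes you already have the cited URLs (for example, copied from Perplexity’s source list), and the domain sets are hypothetical placeholders for your own properties and media targets.

```python
from urllib.parse import urlparse

# Hypothetical placeholders: list your real owned properties and earned-media targets.
# Matching is by exact hostname, so list each subdomain you care about.
OWNED_DOMAINS = {"example.com", "newsroom.example.com"}
EARNED_DOMAINS = {"techpress.example", "reviews.example"}


def domain_of(url: str) -> str:
    """Lowercase hostname with any leading 'www.' removed."""
    host = (urlparse(url).hostname or "").lower()
    return host.removeprefix("www.")


def classify(url: str) -> str:
    """Label a cited URL as owned, earned, or other."""
    host = domain_of(url)
    if host in OWNED_DOMAINS:
        return "owned"
    if host in EARNED_DOMAINS:
        return "earned"
    return "other"


def citation_mix(cited_urls: list[str]) -> dict[str, float]:
    """Share of one answer's citations that are owned, earned, or other (0.0 if none)."""
    counts = {"owned": 0, "earned": 0, "other": 0}
    for url in cited_urls:
        counts[classify(url)] += 1
    total = len(cited_urls)
    return {kind: (n / total if total else 0.0) for kind, n in counts.items()}


print(citation_mix([
    "https://www.example.com/product",
    "https://reviews.example/best-tools",
    "https://forum.other.example/thread/1",
    "https://techpress.example/news",
]))
# {'owned': 0.25, 'earned': 0.5, 'other': 0.25}
```

Run the same function on each branded prompt over time, and a falling owned or earned share becomes a visible signal rather than an anecdote.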

Source inspection changes the risk profile

A polished answer is not the same as a dependable one. Brand teams get into trouble when a summary sounds presentation-ready but the underlying sources are weak, stale, or off-message.

Perplexity reduces part of that risk because source inspection is part of the user experience. That does not guarantee accuracy, but it does make review easier. For marketing and PR teams, that is useful in competitor monitoring, issue tracking, analyst brief prep, and any workflow where the evidence matters as much as the wording.

Copilot carries a different advantage. It keeps approved information moving through documents, email, meetings, and slide workflows with less handoff friction. That is valuable, but it does not remove the need for an external validation step when the content involves public claims about markets, competitors, or your own brand reputation.

Privacy and governance in real workflows

The tool choice also changes what your team can safely put into the prompt box.

Perplexity is well suited to public-web research, market scanning, and source discovery. It is a weaker fit for sensitive launch plans, confidential messaging drafts, or internal strategy documents unless your governance policy explicitly permits that use. Copilot often clears internal review more easily because it fits existing Microsoft permissions, document controls, and procurement standards.

A practical operating rule works well here: use Perplexity to investigate the outside world, and use Copilot to work with approved internal material.

The adoption question most teams miss

The harder problem is not feature comparison. It is behavior change.

Perplexity asks marketers to verify sources, compare narratives, and treat AI answers as market signals. Copilot asks them to build AI into everyday production work. If the team needs better brand intelligence, faster citation checks, and a clearer read on how answer engines portray the brand, Perplexity usually creates more strategic value. If the team’s bottleneck is drafting, summarizing, and distributing approved messaging at scale, Copilot usually wins on adoption.

Many departments end up using both. One tool helps them see the reputation problem. The other helps them operationalize the response.

If your team is also reviewing specialized vendors beyond these two categories, these comparisons of various AI SEO providers are useful for market context.

How AI Sourcing Impacts Your Brand Visibility

The sourcing model behind an AI tool directly affects how your brand appears in answers. This is the part many teams miss. They compare interface quality, output tone, or ease of use, but they don’t ask where the answer came from and what that means for brand visibility.

Perplexity reflects your external footprint faster

Perplexity is often more sensitive to the visible web because it is designed around citation-backed retrieval and synthesis. In one set of video-based tests, Perplexity’s multi-model access produced 20-30% more unique sources per query than Copilot’s single-model reliance, which makes it more useful for spotting source gaps and competitive blind spots. The same breakdown says Perplexity Comet’s news aggregation was 15% faster than Copilot Pro for real-time trend tracking.

For brand teams, that creates both opportunity and risk.

If your PR, newsroom, category pages, comparison pages, listings, and third-party mentions are strong, Perplexity can reflect that strength quickly. If the web is messy, contradictory, or outdated, it can also surface that mess quickly.

Copilot shapes visibility differently

Copilot matters in a different way. It may not be your best tool for open-web source discovery, but it can still influence how internal teams understand the brand, summarize competitors, and circulate narratives inside the organization. That matters because many external outputs start as internal drafts.

A weak external research layer can create flawed inputs. A strong drafting layer can then spread those flaws efficiently.

What brand teams should actually measure

This is where AI search optimization becomes operational. You don’t need to obsess over every answer, but you do need a repeatable measurement process.

Use a simple review model:

- Check answer presence: does the model mention your brand for your core category prompts?

- Inspect source selection: which sites, articles, and listings are driving the answer?

- Review wording: is the model describing your company accurately, favorably, and consistently?

- Benchmark competitor inclusion: are rivals appearing in prompts where you should lead?

- Track volatility over time: are answers stabilizing, drifting, or changing after PR and content updates?
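The review model above can be sketched as a small Python record per prompt. This is an illustration under stated assumptions, not any product’s API: the brand, competitor names, and approved descriptors are hypothetical inputs, wording is checked with a simple phrase match, and volatility is approximated as the share of cited domains that changed between two runs (one minus the Jaccard similarity of the two domain sets).

```python
import re
from dataclasses import dataclass, field


@dataclass
class AnswerReview:
    prompt: str
    brand_present: bool                 # answer presence
    competitors_present: list[str]      # competitor inclusion
    missing_descriptors: list[str]      # approved phrases the wording left out
    cited_domains: set[str] = field(default_factory=set)  # source selection


def mentions(text: str, name: str) -> bool:
    """Case-insensitive whole-word match (assumes the name starts and ends with a letter or digit)."""
    return re.search(rf"\b{re.escape(name)}\b", text, re.IGNORECASE) is not None


def review_answer(prompt: str, answer: str, cited_domains: set[str], brand: str,
                  competitors: list[str], descriptors: list[str]) -> AnswerReview:
    lowered = answer.lower()
    return AnswerReview(
        prompt=prompt,
        brand_present=mentions(answer, brand),
        competitors_present=[c for c in competitors if mentions(answer, c)],
        missing_descriptors=[d for d in descriptors if d.lower() not in lowered],
        cited_domains=set(cited_domains),
    )


def source_drift(previous: AnswerReview, current: AnswerReview) -> float:
    """Share of cited domains that changed between runs: 1 - Jaccard similarity.

    0.0 means the same sources; 1.0 means no overlap at all.
    """
    union = previous.cited_domains | current.cited_domains
    if not union:
        return 0.0
    return 1 - len(previous.cited_domains & current.cited_domains) / len(union)


# Hypothetical brand "Acme" checked against the same prompt in two different weeks.
week1 = review_answer("best scheduling software", "Acme and Globex lead the category.",
                      {"reviews.example", "acme.example"}, "Acme", ["Globex", "Initech"], ["field service"])
week2 = review_answer("best scheduling software", "Globex is the top pick for field service teams.",
                      {"reviews.example", "blog.example"}, "Acme", ["Globex", "Initech"], ["field service"])
print(week2.brand_present, week2.competitors_present)  # False ['Globex']
print(round(source_drift(week1, week2), 2))            # 0.67
```

A drift score near 1.0 after a PR push suggests the model is now leaning on a different source set, which is the moment to inspect which sources moved in or out.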

Your AI visibility is not just whether the model knows you exist. It’s whether the model chooses the right sources when it explains what you do.

If you’re building a process around source control and machine-readable visibility, it’s worth understanding llms.txt and why it matters for AI discovery. It won’t solve every sourcing issue, but it helps teams think more deliberately about how they present important information to AI systems.
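For reference, the llms.txt proposal (llmstxt.org) describes a Markdown file served at the site root: an H1 with the site or project name, a blockquote summary, optional free-text notes, then H2 sections listing links as Markdown list items with an optional note after a colon. The Python sketch below renders that layout from a dictionary. The company name and URLs are placeholders, and support for the file varies across AI systems, so treat it as a publishing aid rather than a guaranteed input.

```python
def build_llms_txt(name: str, summary: str,
                   sections: dict[str, list[tuple[str, str, str]]],
                   notes: str = "") -> str:
    """Render an llms.txt body in the layout the llmstxt.org proposal describes.

    Layout: H1 name, blockquote summary, optional notes, then one H2 per section
    containing "- [title](url): description" list items.
    """
    lines = [f"# {name}", "", f"> {summary}", ""]
    if notes:
        lines += [notes, ""]
    for heading, links in sections.items():
        lines += [f"## {heading}", ""]
        for title, url, description in links:
            item = f"- [{title}]({url})"
            if description:
                item += f": {description}"  # the note after the colon is optional
            lines.append(item)
        lines.append("")
    return "\n".join(lines).rstrip("\n") + "\n"


# Placeholder brand and URLs; the proposal expects the file at the site root (/llms.txt).
print(build_llms_txt(
    "Example Co",
    "Example Co makes scheduling software for field-service teams.",
    {
        "Products": [("Scheduler", "https://example.com/scheduler", "Core product overview")],
        "Press": [("Newsroom", "https://example.com/news", "")],
    },
))
```

Generating the file from a single source of approved messaging keeps it in sync with the pages your PR and content teams already maintain.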

Actionable Use Cases for Marketing and PR

A brand team gets the most value from these tools when it assigns them by job, not by hype. The key question is not which model sounds smarter in a demo. It is which one helps the team spot brand risk, shape narrative coverage, and track whether AI answers are improving after PR and content changes.

Competitor intelligence

A product marketing lead needs a same-day readout on a rival's new positioning, recent media pickup, and category framing. Perplexity usually fits that task better because the team can inspect the sources behind the summary and trace where a narrative is gaining traction.

That matters for brand visibility work. If a competitor keeps appearing in AI answers, the immediate question is not only what they are saying. It is which publishers, review sites, and supporting pages are teaching the model to mention them.

Use Perplexity to:

- Build a current market brief from live web coverage

- Check whether a competitor is owning a narrative across reviews, press, and listicles

- Pressure-test claims before they reach executives

- Spot source patterns that may be influencing AI search visibility

Then switch tools. Copilot is better for turning validated findings into a leadership memo, a presentation draft, or internal talking points inside Microsoft 365.

Content strategy and briefing

Content teams often need to answer a harder question than "what should we write?" They need to know which third-party sources are shaping AI descriptions of their category, and where their brand is absent or mischaracterized.

Perplexity is useful at the research stage because it helps teams gather that outside context quickly. A practical workflow looks like this:

- Ask Perplexity how a category, problem, or vendor set is currently framed

- Review the cited domains and repeated narratives

- Flag competitor pages, analyst mentions, and forums that show up often

- Convert those findings into a content brief built to influence future AI sourcing

- Move the brief into Word or PowerPoint, then use Copilot to draft the internal version stakeholders will approve
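The review-and-flag steps in that workflow amount to counting which domains recur across many answers. Here is a minimal Python sketch, assuming you have saved the cited URLs from each Perplexity answer as a list; the 30% default threshold is an arbitrary starting point, not a recommended value, and the example domains are placeholders.

```python
from collections import Counter
from urllib.parse import urlparse


def frequent_domains(answers: list[list[str]], min_share: float = 0.3) -> list[tuple[str, int]]:
    """Domains cited in at least `min_share` of answers, most frequent first.

    Each inner list holds the cited URLs from one answer; a domain counts once per answer.
    """
    per_answer = Counter()
    for urls in answers:
        domains = {(urlparse(u).hostname or "").removeprefix("www.") for u in urls}
        domains.discard("")  # skip malformed URLs with no hostname
        per_answer.update(domains)
    threshold = min_share * len(answers)
    hits = [(domain, n) for domain, n in per_answer.items() if n >= threshold]
    return sorted(hits, key=lambda item: (-item[1], item[0]))


answers = [
    ["https://www.reviews.example/crm", "https://competitor.example/why-us"],
    ["https://competitor.example/compare", "https://forum.example/t/42"],
    ["https://competitor.example/blog", "https://www.reviews.example/crm"],
]
print(frequent_domains(answers, min_share=0.5))
# [('competitor.example', 3), ('reviews.example', 2)]
```

Counting each domain once per answer keeps one long, link-heavy answer from dominating the tally, so the output reflects how consistently a source shapes the category rather than how often it is linked.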

If your team wants a repeatable process for that review cycle, use LLM monitoring tools for tracking AI brand visibility alongside prompt testing. That gives marketing and PR teams a way to measure whether updated messaging changes brand inclusion, source mix, and answer phrasing over time.

Internal PR and communications

Copilot is usually the better choice for internal communications work. PR and comms teams spend a lot of time turning approved information into usable documents. Board updates, all-hands summaries, media prep notes, launch recaps, and response drafts all benefit from working inside the Microsoft stack where version history, formatting, and existing files are already in place.

Citation depth matters less here. Consistency matters more.


Campaign ideation and message testing

Perplexity is stronger for early message development when the team needs outside input. It helps marketers compare a proposed angle against current category language, recent coverage, and competitor claims instead of generating ideas in a vacuum.

Copilot becomes useful after the message is chosen. That is where it helps teams produce the operational assets that follow a campaign decision, including emails, meeting notes, internal summaries, launch documents, and executive recaps.

The practical split is straightforward. Use Perplexity to understand how the market is being described. Use Copilot to distribute a clear version of your response across the organization.

Field observation: Teams get better results when they treat AI search research and internal communication as separate workflows, then measure whether that split improves brand presence in external answers.

Evaluating Enterprise Readiness and Security

A lot of teams choose AI tools based on output quality alone. That’s a mistake in a mid-market or enterprise setting. Brand, PR, product marketing, and communications teams handle material that can affect revenue, legal exposure, and reputation. The tool that gives the best answer isn’t automatically the tool your organization should trust most broadly.

Where Copilot has the governance advantage

According to Perplexity’s enterprise comparison with Copilot, Copilot integrates IAM and cybersecurity features natively, using opt-in permissions and business-configurable policies for safer control. That matters when teams are handling internal documents, approvals, customer-sensitive information, or regional brand messaging that shouldn’t move loosely across tools.

For organizations already deep in Microsoft 365, this governance model is a major reason Copilot gets approved faster. Identity, access, document permissions, and policy controls are already part of the operating environment.

Where Perplexity needs more caution

Perplexity’s research-first design is attractive because it helps teams move quickly. But speed changes the risk profile. If users start treating it as a workspace for confidential planning, the organization can create a blind spot.

The same enterprise comparison highlights a broader trade-off. Perplexity emphasizes citation-first accuracy for research, while Copilot’s structure gives security and IT teams more familiar levers for control. That doesn’t mean Perplexity is a poor enterprise choice. It means marketing leaders need a sharper usage policy.

Use practical guardrails:

- Define approved inputs so teams know what public and non-public material can enter each tool.

- Separate research from approvals so external synthesis doesn’t become the place where final messaging lives.

- Bring IT and security in early when evaluating AI for PR, brand, and executive communications.

- Audit prompt behavior in the same way you audit other software access patterns.
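The first guardrail, defining approved inputs, can be written down as a simple policy table. The sketch below is hypothetical: the three sensitivity levels and the per-tool ceilings are examples your IT and security teams would replace, and it only encodes a rule for people and scripts to check against. It is not an enforcement mechanism inside either product.

```python
from enum import IntEnum


class Sensitivity(IntEnum):
    PUBLIC = 1        # published pages, press coverage, public reviews
    INTERNAL = 2      # internal notes, approved-but-unreleased drafts
    CONFIDENTIAL = 3  # launch plans, strategy documents


# Hypothetical policy: the most sensitive material each tool may receive.
MAX_ALLOWED = {
    "perplexity": Sensitivity.PUBLIC,   # external research only
    "copilot": Sensitivity.INTERNAL,    # internal drafting inside Microsoft 365
}


def may_submit(tool: str, level: Sensitivity) -> bool:
    """True if material at `level` is approved for `tool`; tools not in the policy are denied."""
    ceiling = MAX_ALLOWED.get(tool.lower())
    return ceiling is not None and level <= ceiling


print(may_submit("Perplexity", Sensitivity.INTERNAL))   # False
print(may_submit("copilot", Sensitivity.INTERNAL))      # True
print(may_submit("copilot", Sensitivity.CONFIDENTIAL))  # False
```

Denying unlisted tools by default mirrors the "separate research from approvals" guardrail: a new AI tool gets no sensitive input until someone adds it to the policy deliberately.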

The question to ask before purchase

Don’t ask only which tool saves time. Ask which tool matches the sensitivity of the work.

If your team mostly needs external market intelligence, Perplexity may offer more value. If your team is drafting from internal materials in a regulated or tightly governed environment, Copilot will often be easier to defend internally.

For teams building a broader oversight process around AI output, this review of LLM monitoring tools for marketers is useful because it frames monitoring as a governance function, not just a reporting function.

Your AI Strategy: Choosing a Tool and Taking Control

The practical decision is straightforward.

Choose Perplexity if your team’s main problem is external research. It’s the better fit for competitive intelligence, source verification, trend monitoring, and checking how category narratives are being assembled from the open web.

Choose Copilot if your team’s main problem is execution inside Microsoft 365. It’s the better fit for internal communications, first drafts, summaries, and content packaging where governance and workflow continuity matter more than deep citation behavior.

The better answer for most marketing departments

For many teams, this won’t be an either-or decision. The stronger setup is often:

- Perplexity for research

- Copilot for internal production

- A formal review process for AI-visible brand narratives

That last point matters most. Tool choice helps, but it doesn’t solve the deeper issue. Your brand is already being interpreted by AI systems. If you aren’t monitoring that actively, you’re letting third-party sources, outdated pages, and inconsistent citations define you by default.

What to do next

Start with a small operating model.

- Build a prompt set around your brand, products, executives, and core category terms.

- Run the same prompt set regularly across the AI tools that matter to your buyers.

- Record whether your brand appears, how it is described, and which sources support the answer.

- Flag gaps where competitors appear and you don’t.

- Route findings into PR, content, SEO, and brand teams so they can fix the underlying source issues.
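The loop above can be sketched in Python. This is a hedged illustration: the fetch function is a hypothetical stand-in for however you collect answers (an API wrapper, a vendor export, or manual copy-paste), brand detection is a plain substring match, and the gap-to-team routing table is an example. It covers presence, competitor gaps, and routing; a fuller version would also store the description and cited sources for each row.

```python
from typing import Callable, Optional

# Hypothetical: (tool name, prompt) -> answer text. No specific vendor API is assumed.
Fetcher = Callable[[str, str], str]

# Hypothetical routing of gap types to the team that owns the fix.
ROUTES = {
    "competitor_only": "PR",        # listed rivals named, your brand missing
    "brand_absent": "Content/SEO",  # neither you nor listed rivals named
}


def run_prompt_set(prompts: list[str], tools: list[str], fetch: Fetcher,
                   brand: str, competitors: list[str]) -> list[dict]:
    """Run every prompt on every tool and record presence, competitors, and a routed gap."""
    rows = []
    for prompt in prompts:
        for tool in tools:
            answer = fetch(tool, prompt).lower()
            brand_present = brand.lower() in answer  # plain substring match, a simplification
            rivals = [c for c in competitors if c.lower() in answer]
            gap: Optional[str] = None
            if not brand_present:
                gap = "competitor_only" if rivals else "brand_absent"
            rows.append({
                "prompt": prompt,
                "tool": tool,
                "brand_present": brand_present,
                "competitors": rivals,
                "route_to": ROUTES[gap] if gap else None,
            })
    return rows


# Example with canned answers standing in for real tool output.
canned = {
    ("perplexity", "best scheduling software"): "Globex and Acme are popular choices.",
    ("copilot", "best scheduling software"): "Globex leads this category.",
}
for row in run_prompt_set(["best scheduling software"], ["perplexity", "copilot"],
                          lambda tool, prompt: canned.get((tool, prompt), ""),
                          "Acme", ["Globex", "Initech"]):
    print(row["tool"], row["brand_present"], row["route_to"])
# perplexity True None
# copilot False PR
```

Keeping the fetcher as a plain callable means the same loop works whether answers arrive from an API, a monitoring tool export, or a spreadsheet someone fills in by hand.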

The winning AI strategy isn’t just choosing the best assistant. It’s building a feedback loop between AI answers and the content, PR, and listings that influence those answers.

That’s how marketing teams move from reacting to AI output to shaping it.

If your team needs a way to measure AI search visibility instead of guessing at it, promptposition is built for that job. It helps marketing and brand teams track how models like Perplexity, Copilot, ChatGPT, Claude, and Gemini describe their company, which sources those models rely on, how sentiment shifts over time, and where competitors are showing up instead. That gives you something more useful than anecdotes. It gives you a repeatable system for managing brand presence in AI search.