Perplexity vs Gemini: Ultimate AI Comparison

A lot of teams are seeing the same pattern now. They ask Perplexity for the top tools in their category and get a clean answer with citations, competitor mentions, and sometimes their own brand in the mix. They ask Gemini a similar question and the brand either moves down the list, shows up more vaguely, or disappears.

That difference is not random. It comes from two very different systems deciding what counts as trustworthy, current, and worth surfacing. For marketers, that changes how brand reputation works in AI search.

The usual perplexity vs gemini comparison focuses on speed, reasoning, or interface. Those matter. But if you manage SEO, PR, content, or category positioning, the more important question is simpler: where does your brand appear, how is it described, and why does one engine trust signals that the other ignores?

Perplexity vs Gemini The New SEO Frontier

Search used to be easier to model. You had rankings, snippets, local packs, and a familiar set of SEO levers. AI search breaks that neat structure.

A brand can perform well in one answer engine and weakly in another because the engines do not build answers the same way. Perplexity leans on real-time web indexing and explicit citations, including a reported 93.9% factual accuracy via RAG in the comparison summary at GLB GPT's Perplexity vs Gemini analysis. Gemini leans more heavily on Google's ecosystem and knowledge graph, which often gives it stronger context around established entities but can leave newer brands with less visibility.

That split creates a new SEO problem. It is no longer enough to rank in search results or publish a category page and assume AI systems will pick it up evenly.

Here is the practical consequence.

| Question marketers should ask | Perplexity tends to reward | Gemini tends to reward |

|---|---|---|

| Who gets cited? | Fresh, source-grounded web content | Brands with stronger Google ecosystem signals |

| Who appears fastest after publishing? | Brands with recent citable pages | Brands with established authority patterns |

| Who is easier to audit? | Answers with visible inline citations | Answers that are often more synthesized and less explicit |

| Who may struggle? | Brands with weak third-party coverage | Niche or emerging brands without broad entity strength |

What changes in this new environment is the job description. SEO is still part of it, but AI Search Optimization is a more accurate frame. You are not only trying to rank pages. You are trying to shape how answer engines assemble your brand narrative.

The same brand can look credible, current, and recommended on Perplexity, then look generic or absent on Gemini. That is a design difference, not a reporting error.

For reputation management, this matters because visibility and tone now travel together. If one engine is pulling recent reviews, forum discussions, comparison pages, and product documentation, your live web footprint matters immediately. If the other is drawing more from structured authority signals and deeper synthesis, your longer-term brand architecture matters more.

What Are Perplexity and Gemini

Perplexity and Gemini both answer questions in natural language. That similarity hides the part marketers need to care about most. They are not just two interfaces with slightly different output styles. They behave like different research environments.

Perplexity acts like a live research engine

Perplexity is most useful when you need an answer tied to the current web. It searches, compiles, and cites. That makes it closer to a research assistant than a pure chatbot.

For marketers, that means Perplexity often exposes the exact materials shaping your brand mention. If it recommends your competitor, you can usually inspect the citation trail and see whether it relied on product review sites, comparison posts, Reddit threads, product docs, or industry writeups.

That transparency changes optimization. You can work backward from the answer to the source set.

Teams that already understand answer engine differences from adjacent tools will recognize the pattern. This comparison of the difference between Perplexity and ChatGPT is useful because it shows why citation visibility matters when your goal is not just output quality, but brand traceability.

Gemini acts more like a synthesis layer inside Google’s world

Gemini is broader in scope. It can support ideation, writing, reasoning, multimodal tasks, and workflows connected to Google products. For brand visibility, the important point is that Gemini often feels less like a search-first answer engine and more like an analyst synthesizing from a wider internal context.

That has upside. Gemini often produces more structured, report-like output. It can be better at strategic framing, tradeoff analysis, and combining ideas into a more cohesive response.

It also has a downside for marketers doing reputation work. If the answer is less explicit about where claims come from, diagnosis gets harder. You may know your brand is absent or flattened into generic category language, but not immediately know which external source gap caused it.

The practical mental model

Use this lens:

- Perplexity: fast, current, citable, web-facing

- Gemini: synthesized, contextual, ecosystem-aligned, often less source-explicit

That distinction is why perplexity vs gemini is not a simple quality contest. It is a question of which system reflects your brand’s source footprint more directly, and which system reflects your broader authority posture more strongly.

Core Differences Sourcing Accuracy and Speed

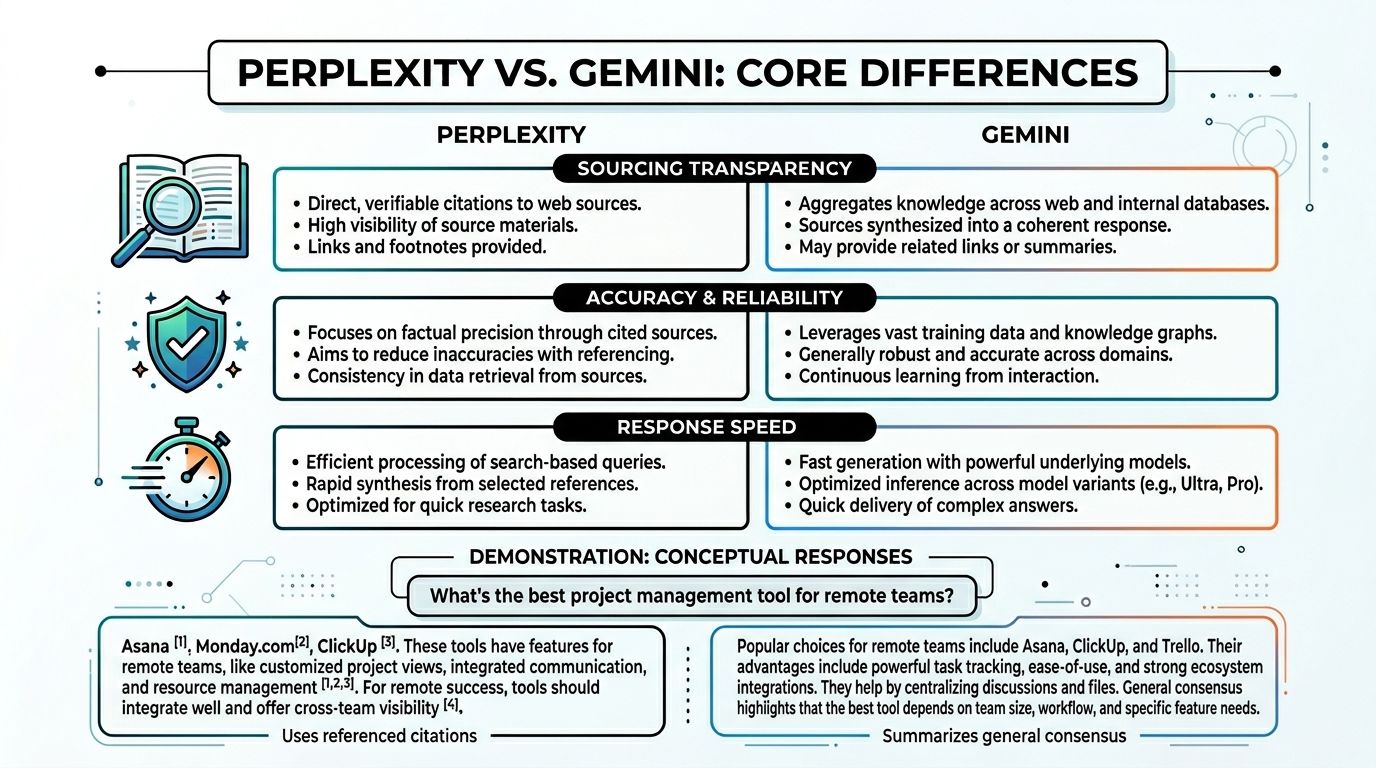

A marketer asks both engines the same question after a rough week of press coverage: “Which vendor is best for remote teams?” One answer shows its receipts. The other gives a cleaner narrative. If your job is brand reputation, that difference changes how fast you can diagnose a visibility problem and how confidently you can fix it.

What happens on a real prompt

Use a practical query: “What’s the best project management tool for remote teams?”

Perplexity usually answers with visible citations tied to live web pages. Those sources often include comparison articles, product pages, forum threads, documentation, and other indexable content you can inspect line by line. That makes it useful for one specific job marketers do every week: tracing why a competitor appeared and why your brand did not.

Gemini usually produces a more polished synthesis. The writing can feel more complete and more strategic, especially for broader planning questions. But lighter source visibility creates a real operational problem. If your brand is missing, generalized, or framed against the wrong competitors, the path back to the source gap is less obvious.

That is the practical split. Perplexity is easier to audit. Gemini is often easier to read.

Speed changes the workflow

Speed matters less for casual use than for active monitoring.

Perplexity tends to return usable answers faster for live research tasks, especially when the prompt depends on current web evidence. That speed helps with fast checks such as competitor scans, launch-day monitoring, citation review, and “why are we absent from this category answer?” investigations.

Gemini is often slower, but the extra time can produce a more developed synthesis. For internal planning, that trade-off can be acceptable. For reputation work, it often is not. A PR lead trying to understand why three publishers and two Reddit threads shaped an answer needs traceability first, polish second.

I would use them differently on purpose:

- Use Perplexity for citation checks, current category prompts, source discovery, and rapid audits of how the web is describing your brand.

- Use Gemini for market framing, synthesis across broader context, and report-style outputs that help teams plan positioning or messaging.

Accuracy is partly about verification

For marketers, accuracy is not only about whether a sentence is technically correct. It is also about whether the answer reflects the current source environment and whether your team can verify the claims behind it.

Perplexity has an advantage when the category is moving fast. If your company just launched a feature, won coverage, published a comparison page, or started showing up in community discussions, Perplexity is more likely to expose that source trail directly. That makes it a stronger diagnostic engine for measuring brand visibility in the open web.

Gemini can produce better synthesis across a wider context, but that strength comes with a trade-off. It may compress category nuance, favor stronger established entities, or smooth over the exact documents that drove the conclusion. For a well-known brand, that can work in your favor. For a challenger brand trying to break into AI recommendations, it can slow recognition.

A useful model here is how query fan-out changes source selection across AI engines. Different systems branch into different retrieval paths before they generate an answer. That is why two engines can answer the same prompt, sound equally confident, and still surface different brands.

| Factor | Perplexity | Gemini |

|---|---|---|

| Sourcing style | Live web retrieval with visible citations | Broader synthesis with stronger Google context |

| Best strength | Verifiable, current answers | Deeper analytical framing |

| Weak point for marketers | Can surface messy public sentiment fast | Can obscure why a brand was included or omitted |

| Good fit | PR monitoring, current comparisons, category checks | Strategic summaries, internal analysis, document-heavy tasks |

A short walkthrough helps.

If your team needs to know what the model can defend with citations right now, Perplexity is usually the better diagnostic tool. If your team needs a more developed market narrative, Gemini is often more useful.

A significant mistake is treating this as a simple quality contest. In brand reputation work, the sourcing model usually predicts the outcome better than the interface does.

Brand Visibility Where You Show Up and Why It Differs

A brand mention in AI search is not one thing. It is the visible result of a hidden sourcing system.

If your team is trying to understand why one engine recommends you and the other does not, stop looking at the answer first. Look at the type of evidence each engine is likely to trust.

Perplexity visibility is built from citable web presence

Perplexity tends to reward brands that publish material the engine can cite and reconcile quickly. That usually includes recent comparison pages, strong editorial reviews, product documentation, active community discussion, and clear landing pages.

A newer company can punch above its size here if it publishes well and gets discussed in places that Perplexity can pull into an answer. A stale enterprise site with weak third-party coverage can lose ground even if the company is well known elsewhere.

This is why AI visibility often looks more agile on Perplexity. Brand presence is closer to your live web footprint.

Gemini visibility is shaped by entity strength and Google alignment

Gemini often behaves differently. Established entities with clearer brand signals across Google’s ecosystem, stronger structured data, and broader authority tend to have an easier time staying visible.

That does not mean content stops mattering. It means content alone may not move the needle as fast if the broader entity picture is weak or fragmented.

For marketers, this creates two separate optimization environments:

- Perplexity environment: current, citable, distributed across the web

- Gemini environment: authoritative, structured, reinforced by long-term ecosystem signals

The result is a split-screen brand reality.

The same brand can be a category leader on Perplexity and nearly invisible on Gemini. This is not a flaw. It is a function of their design.

What tends to work on each platform

A useful way to audit your position is to ask what kind of proof your brand currently generates.

| Visibility driver | More likely to help on Perplexity | More likely to help on Gemini |

|---|---|---|

| Fresh comparison content | Yes | Sometimes |

| Strong Reddit or forum discussion | Often | Less directly |

| Structured brand/entity signals | Helpful | More important |

| Long-standing authority in category | Helpful | Often central |

| Product docs and support content | Often citable | Helpful if they reinforce entity understanding |

Brand teams that track only one engine miss this split. They may think their AI search strategy is working because they appear in one environment, while competitors gain ground in the other.

A broader AI footprint review helps catch that. This guide to AI brand visibility is useful because it frames visibility as a measurable layer above traditional rankings. That is the right lens. You are not only asking whether your site can rank. You are asking whether your brand can be selected, summarized, and recommended.

What does not work

Three habits usually fail.

- Publishing generic “best tools” pages with no original evidence or point of view. These are hard to cite and easy to ignore.

- Treating AI search like prompt engineering. You do not control the user’s phrasing at scale.

- Assuming Google visibility transfers cleanly to Gemini and Perplexity. Sometimes it helps. Often it does not map directly.

The brands that improve fastest are the ones that treat AI answers as outputs of source competition, not magic.

Sentiment Analysis How Each AI Portrays Your Brand

Visibility tells you whether your brand appears. Sentiment tells you whether that appearance helps or hurts. Here, perplexity vs gemini becomes a reputation issue, not just a discovery issue.

Gemini often smooths tone

Gemini tends to be more diplomatically positive or neutral in how it describes brands. It usually avoids sharp negative framing unless the prompt strongly asks for weaknesses, risks, or tradeoffs.

That style can make Gemini look safer from a PR perspective. A brand may not get enthusiastic language, but it often avoids blunt criticism too. For established brands, that can preserve a fairly stable narrative.

The downside is subtle. If Gemini smooths over category differences, it can also flatten your strengths. You appear, but the answer may not clearly explain why your product deserves preference.

Perplexity mirrors source sentiment more directly

Perplexity tends to reflect the tone of the materials it pulls in. If recent reviews are strong, positive language can show up quickly. If there are critical Reddit threads, pricing complaints, or mixed review pages, that texture can surface just as quickly.

That makes Perplexity more useful as an early warning system. It can expose sentiment shifts before slower-moving brand narratives catch up elsewhere.

The content owner’s observation is the practical version of this: a brand can score significantly higher on Gemini and notably lower on Perplexity because each engine weighs sources differently. That example is helpful directionally. The important takeaway is not the exact score gap. It is the cause. Perplexity tends to expose mixed public evidence faster.

What marketers should monitor

Sentiment in AI answers is rarely just “positive” or “negative.” The diagnostic questions are narrower.

- Does the answer mention strengths with conviction?

- Does it pair your brand with caveats immediately?

- Does it quote criticism or summarize it?

- Does it portray you as current, risky, established, niche, expensive, limited, or premium?

Those distinctions matter because users often accept AI phrasing as category shorthand.

If Perplexity starts describing your brand with recurring caveats, do not treat that as an output issue. Treat it as a source issue.

This is why reputation teams need to review the wording itself, not only mention counts. A brand can be visible and still lose because the answer frames it as a secondary option, a niche tool, or a product with “mixed reviews.”

For teams trying to operationalize this, a framework around brand sentiment is useful because it turns vague impressions into reviewable patterns. The goal is not to chase perfect positivity. The goal is to understand how each engine compresses public evidence into a few decisive lines.

What usually changes sentiment fastest

Perplexity and Gemini respond to different levers, but the broad principle is the same. You do not optimize sentiment by trying to game prompts. You optimize it by improving the source material and the brand evidence available to the engine.

That means:

- stronger comparison content

- clearer product positioning

- fewer unresolved public complaints

- more trustworthy third-party coverage

- better consistency between what your site claims and what users say elsewhere

The Marketers Playbook Monitoring and Optimization

The biggest mistake teams make is checking AI search manually once in a while and calling that monitoring. That might catch a dramatic issue. It will not show patterns.

What matters is repeatable measurement.

Track three KPIs first

Start with a small set of metrics your team can use.

Visibility share

How often does your brand appear for core commercial and category prompts compared with named competitors?Sentiment pattern

Not a single score in isolation. Look for recurring language, favorable positioning, and repeated objections.Source quality

What kinds of pages keep influencing the answer? Review sites, your own pages, forums, listicles, analyst content, docs, or outdated articles.

If you cannot answer those three questions across both engines, you do not yet have an AI search strategy. You have spot checks.

Manual checks break down fast

Manual testing sounds fine until the prompt set expands. Then you encounter problems: one teammate uses different wording than another

- results drift over time

- nobody logs exact phrasing

- sentiment changes are noticed too late

- competitor mentions go untracked

- source patterns remain anecdotal

That is why teams move from screenshots to systems.

One option is PromptPosition, which tracks visibility, sentiment, positioning, competitor presence, and underlying sources across models including Perplexity and Gemini. The practical value is not “more dashboards.” It is having side-by-side evidence when the same brand appears differently across engines.

Optimize Perplexity with source readiness

Perplexity responds fastest when the open web gives it something solid to cite.

Focus on assets that are useful in answer assembly:

Comparison pages with substance

Publish category pages and comparisons that explain tradeoffs clearly. Thin pages rarely become durable citations.Documentation that supports claims

If your product is recommended for a use case, docs and help content should validate that use case.Fresh third-party mentions

Encourage coverage in credible publications, software directories, industry blogs, and communities where buyers compare tools.Community cleanup

If negative threads dominate the conversation, Perplexity may surface that tone. The solution is not suppression. It is response, clarification, and better user experience.

Optimize Gemini with entity strength

Gemini usually needs a stronger foundation.

Priorities tend to include:

- Consistent brand facts across the web

- Structured data and clear entity signals

- Authoritative content tied to real expertise

- A stronger Google ecosystem footprint

- Clear category definition on your own site

This work often looks less exciting because it is slower and more architectural. It still matters because Gemini is more likely to reward brands that read as established, coherent entities.

Stop thinking in terms of prompt tuning

The content owner put this well. You are not optimizing the prompt itself, because users phrase things however they want. You are optimizing the materials the engine can use when it answers.

That changes execution.

A good workflow looks like this:

| Step | What to do | Why it matters |

|---|---|---|

| Build a prompt set | Include category, competitor, use-case, and comparison prompts | Visibility varies by intent |

| Review answer language | Save exact wording, not just rankings | Reputation lives in phrasing |

| Audit sources | Identify what pages keep shaping the answer | Source patterns reveal what to fix |

| Publish or repair evidence | Improve pages, docs, PR coverage, and third-party presence | Engines need better material |

| Recheck over time | Watch for movement across both engines | Changes land unevenly |

AI search optimization works when you improve source material and then verify whether the engines changed their portrayal of your brand.

That last step matters most. Teams often publish a strong page and assume the job is done. In reality, one engine may start citing it quickly while the other barely shifts. Without monitoring, you cannot tell whether the work changed perception or just added another unused asset to the site.

Recommendation Which AI Engine Matters More For You

If you run growth for a newer company, fast-moving product, or niche category, Perplexity usually deserves immediate attention. It is more responsive to current web evidence, more transparent about citations, and more likely to reflect fresh category movement.

That makes it especially important for:

- startups entering crowded software categories

- challenger brands trying to displace incumbents

- teams running active PR and content programs

- products with frequent launches or rapid positioning shifts

If you manage an established brand with broad authority, a mature content estate, and strong visibility across Google’s ecosystem, Gemini deserves equal attention for a different reason. It can reflect your entity strength, category legitimacy, and broader trust posture in ways that matter for long-horizon reputation.

That makes Gemini especially important for:

- enterprise brands

- companies with strong branded search demand

- organizations investing heavily in structured authority

- teams using Google Workspace and multimodal workflows internally

But the practical answer is not to pick one.

A brand can gain traction on Perplexity and still lose strategic ground on Gemini. It can also look stable on Gemini while Perplexity starts surfacing criticism from fresh public sources. Both situations matter.

So the right recommendation is simple:

Prioritize Perplexity if you need fast movement. Prioritize Gemini if you need durable authority. Monitor both if you care about actual brand health.

That last part is the only essential point. AI search is already fragmented enough that single-platform visibility gives a false sense of security.

Frequently Asked Questions

Is Perplexity a model or a search layer?

Marketers often find it more useful to think of Perplexity as an answer engine built around live retrieval and citations. The important operational point is that it behaves like a web-connected research system, not just a closed chatbot.

Is Gemini better for general users?

Often, yes, if the goal is synthesis, ideation, writing help, or broader reasoning in a Google-centered workflow. For brand diagnostics, that is not always the deciding factor. Marketers usually need traceability, not just polished output.

Which one is better for newer brands?

Newer or niche brands often have a clearer path into Perplexity because strong recent web content can influence visibility more directly. Gemini may take longer to reflect that progress if the brand lacks broader authority signals.

Can you optimize AI search with prompts alone?

No. You do not control how users ask questions at scale. The reliable lever is the underlying source material that engines use to build answers.

How often should a brand check its AI visibility?

One-off checks are rarely enough. Category narratives, reviews, comparison pages, and competitor mentions change. A regular monitoring rhythm is more useful than occasional spot checks, especially if your category moves quickly or your team runs active PR and content campaigns.

If your team needs a clearer view of how Perplexity, Gemini, and other models describe your brand, promptposition can help you monitor visibility, sentiment, competitor mentions, and source patterns in one place. That makes it easier to see where your brand is strong, where it is missing, and what source-level changes are most likely to improve AI search presence.